In this article, you will learn how to design, implement, and evaluate memory systems that make agentic AI applications more reliable, personalized, and effective over time.

Topics we will cover include:

- Why memory should be treated as a systems design problem rather than just a larger-context-model problem.

- The main memory types used in agentic systems and how they map to practical architecture choices.

- How to retrieve, manage, and evaluate memory in production without polluting the context window.

Let’s not waste any more time.

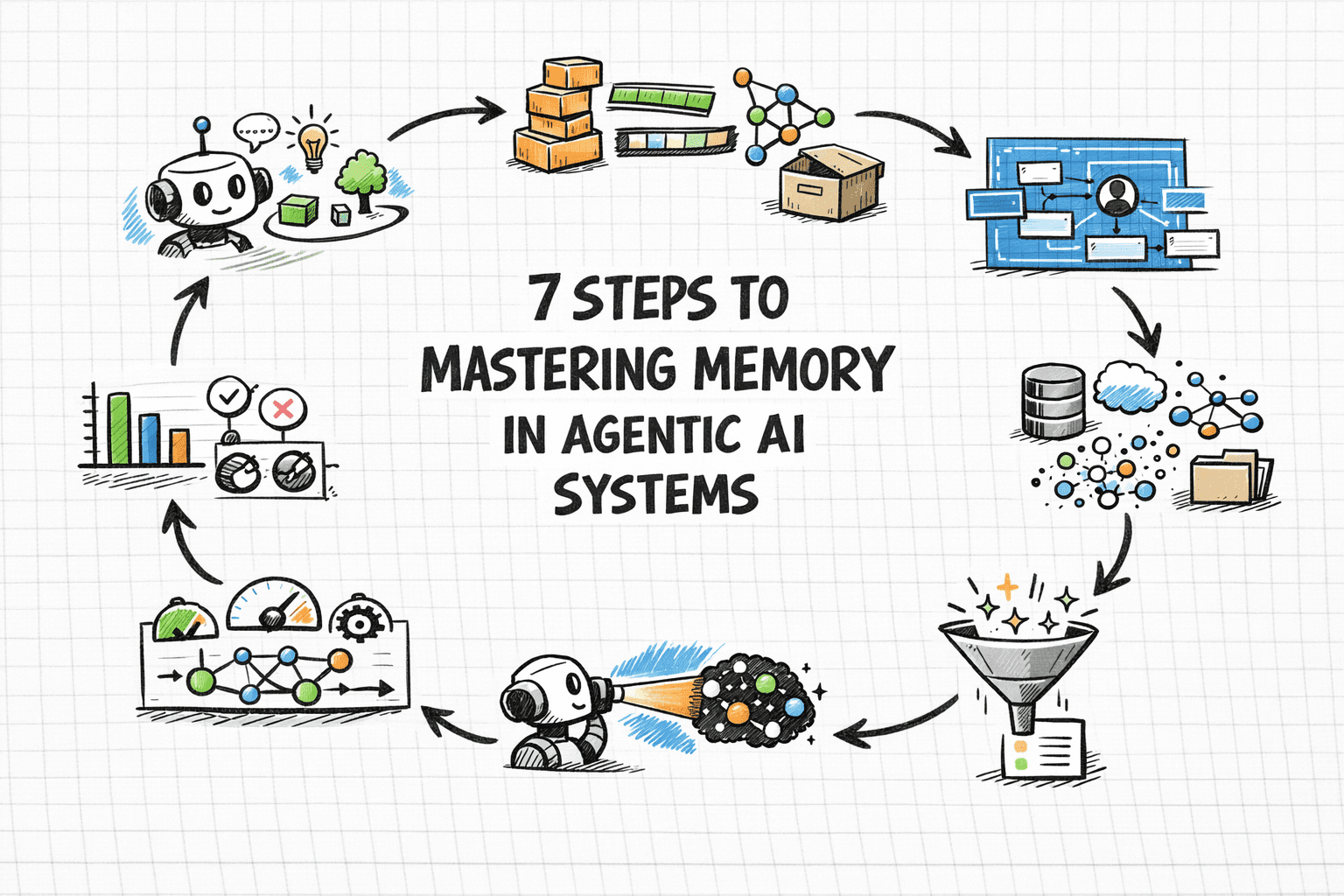

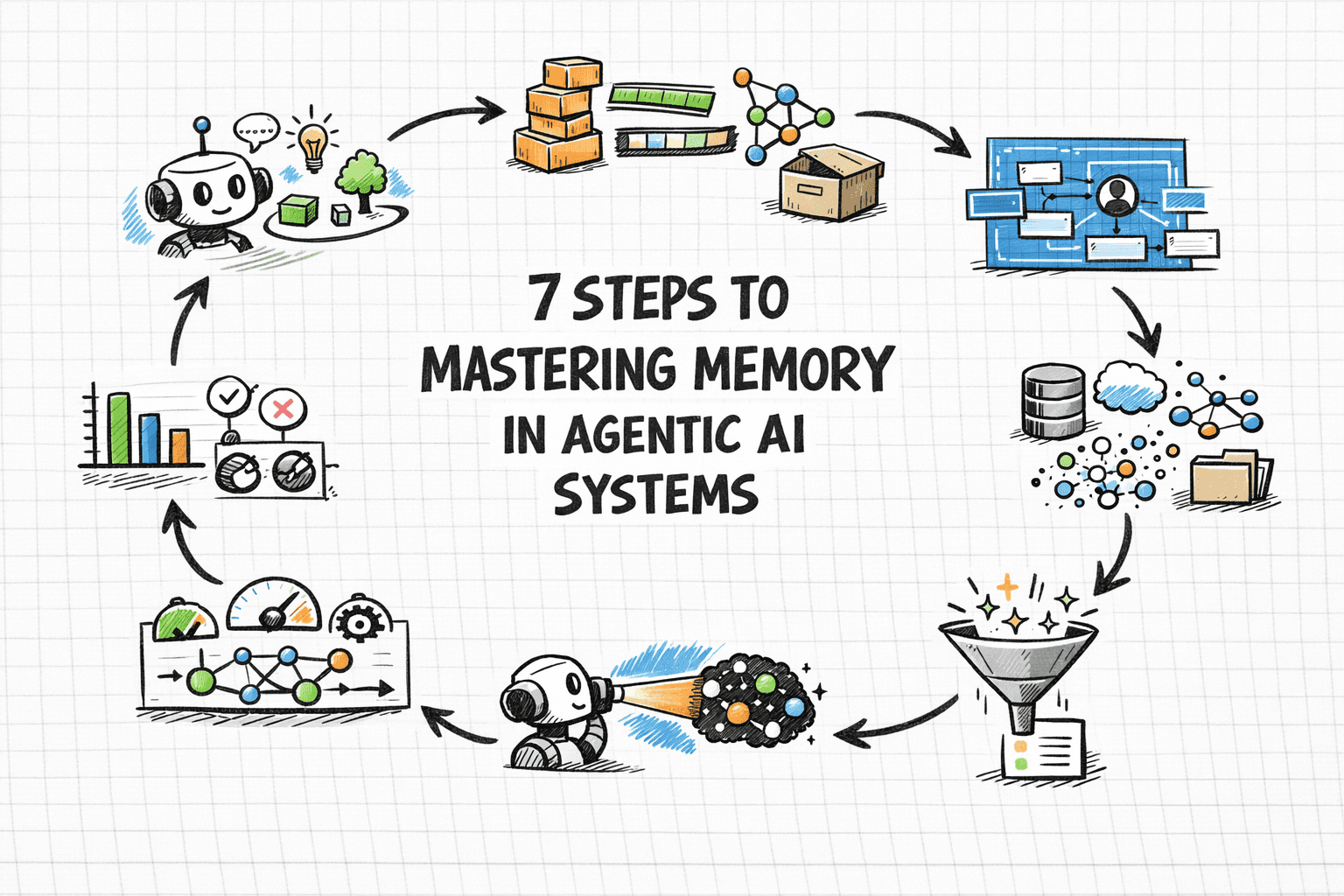

7 Steps to Mastering Memory in Agentic AI Systems

Image by Editor

Introduction

Memory is one of the most overlooked parts of agentic system design. Without memory, every agent run starts from zero — with no knowledge of prior sessions, no recollection of user preferences, and no awareness of what was tried and failed an hour ago. For simple single-turn tasks, this is fine, but for agents running and coordinating multi-step workflows, or serving users repeatedly over time, statelessness becomes a hard ceiling on what the system can actually do.

Memory lets agents accumulate context across sessions, personalize responses over time, avoid repeating work, and build on prior outcomes rather than starting fresh every time. The challenge is that agent memory isn’t a single thing. Most production agents need short-term context for coherent conversation, long-term storage for learned preferences, and retrieval mechanisms for surfacing relevant memories.

This article covers seven practical steps for implementing effective memory in agentic systems. It explains how to understand the memory types your architecture needs, choose the right storage backends, write and retrieve memories correctly, and evaluate your memory layer in production.

Step 1: Understanding Why Memory Is a Systems Problem

Before touching any code, you need to reframe how you think about memory. The instinct for many developers is to assume that using a bigger model with a larger context window solves the problem. It doesn’t.

Researchers and practitioners have documented what happens when you simply expand context: performance degrades under real workloads, retrieval becomes expensive, and costs compound. This phenomenon — sometimes called “context rot” — occurs because an enlarged context window filled indiscriminately with information hurts reasoning quality. The model spends its attention budget on noise rather than signal.

Memory is fundamentally a systems architecture problem: deciding what to store, where to store it, when to retrieve it, and, more importantly, what to forget. None of those decisions can be delegated to the model itself without explicit design. IBM’s overview of AI agent memory makes an important point: unlike simple reflex agents, which don’t need memory at all, agents handling complex goal-oriented tasks require memory as a core architectural component, not an afterthought.

The practical implication is to design your memory layer the way you’d design any production data system. Think about write paths, read paths, indexes, eviction policies, and consistency guarantees before writing a single line of agent code.

Further reading: What Is AI Agent Memory? – IBM Think and What Is Agent Memory? A Guide to Enhancing AI Learning and Recall | MongoDB

Step 2: Learning the AI Agent Memory Type Taxonomy

Cognitive science gives us a vocabulary for the distinct roles memory plays in intelligent systems. Applied to AI agents, we can roughly identify four types, and each maps to a concrete architectural decision.

Short-term or working memory is the context window — everything the model can actively reason over in a single inference call. It includes the system prompt, conversation history, tool outputs, and retrieved documents. Think of it like RAM: fast and immediate, but wiped when the session ends. It’s typically implemented as a rolling buffer or conversation history array, and it’s sufficient for simple single-session tasks but cannot survive across sessions.

Episodic memory records specific past events, interactions, and outcomes. When an agent recalls that a user’s deployment failed last Tuesday due to a missing environment variable, that’s episodic memory at work. It’s particularly effective for case-based reasoning — using past events, actions, and outcomes to improve future decisions. Episodic memory is commonly stored as timestamped records in a vector database and retrieved via semantic or hybrid search at query time.

Semantic memory holds structured factual knowledge: user preferences, domain facts, entity relationships, and general world knowledge relevant to the agent’s scope. A customer service agent that knows a user prefers concise answers and operates in the legal industry is drawing on semantic memory. This is often implemented as entity profiles updated incrementally over time, combining relational storage for structured fields with vector storage for fuzzy retrieval.

Procedural memory encodes how to do things — workflows, decision rules, and learned behavioral patterns. In practice, this shows up as system prompt instructions, few-shot examples, or agent-managed rule sets that evolve through experience. A coding assistant that has learned to always check for dependency conflicts before suggesting library upgrades is expressing procedural memory.

These memory types don’t operate in isolation. Capable production agents often need all of these layers working together.

Further reading: Beyond Short-term Memory: The 3 Types of Long-term Memory AI Agents Need and Making Sense of Memory in AI Agents by Leonie Monigatti

Step 3: Knowing the Difference Between Retrieval-Augmented Generation and Memory

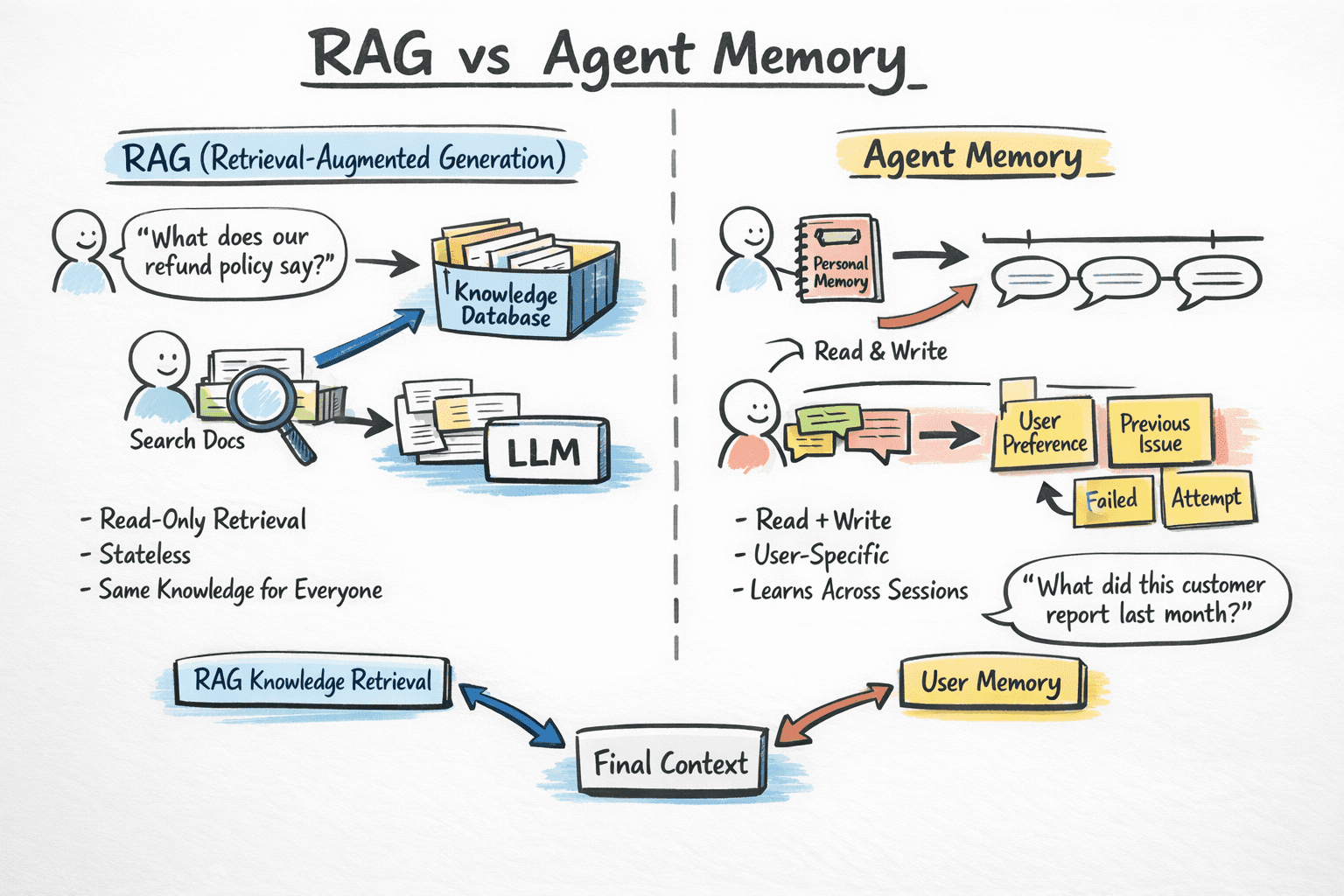

One of the most persistent sources of confusion for developers building agentic systems is conflating retrieval-augmented generation (RAG) with agent memory.

⚠️ RAG and agent memory solve related but distinct problems, and using the wrong one for the wrong job leads to agents that are either over-engineered or systematically blind to the right information.

RAG is fundamentally a read-only retrieval mechanism. It grounds the model in external knowledge — your company’s documentation, a product catalog, legal policies — by finding relevant chunks at query time and injecting them into context. RAG is stateless: each query starts fresh, and it has no concept of who is asking or what they’ve said before. It’s the right tool for “what does our refund policy say?” and the wrong tool for “what did this specific customer tell us about their account last month?”

Memory, by contrast, is read-write and user-specific. It enables an agent to learn about individual users across sessions, recall what was attempted and failed, and adapt behavior over time. The key distinction here is that RAG treats relevance as a property of content, while memory treats relevance as a property of the user.

RAG vs Agent Memory | Image by Author

Here’s a practical approach: use RAG for universal knowledge, or things true for everyone, and memory for user-specific context, or things true for this user. Most production agents benefit from both running in parallel, each contributing different signals to the final context window.

Further reading: RAG vs. Memory: What AI Agent Developers Need to Know | Mem0 and The Evolution from RAG to Agentic RAG to Agent Memory by Leonie Monigatti

Step 4: Designing Your Memory Architecture Around Four Key Decisions

Memory architecture must be designed upfront. The choices you make about storage, retrieval, write paths, and eviction interact with every other part of your system. Before you build, answer these four questions for each memory type:

1. What to Store?

Not everything that happens in a conversation deserves persistence. Storing raw transcripts as retrievable memory units is tempting, but it produces noisy retrieval.

Instead, distill interactions into concise, structured memory objects — key facts, explicit user preferences, and outcomes of past actions — before writing them to storage. This extraction step is where most of the real design work happens.

2. How to Store It?

There are many ways to do this. Here are four primary representations, each with its own use cases:

- Vector embeddings in a vector database enable semantic similarity retrieval; they are ideal for episodic and semantic memory where queries are in natural language

- Key-value stores like Redis offer fast, precise lookup by user or session ID; they are well-suited for structured profiles and conversation state

- Relational databases offer structured querying with timestamps, TTLs, and data lineage; they are useful when you need memory versioning and compliance-grade auditability

- Graph databases represent relationships between entities and concepts; this is useful for reasoning over interconnected knowledge, but it is complex to maintain, so reach for graph storage only once vector + relational becomes a bottleneck

3. How to Retrieve It?

Match retrieval strategy to memory type. Semantic vector search works well for episodic and unstructured memories. Structured key lookup works better for profiles and procedural rules. Hybrid retrieval — combining embedding similarity with metadata filters — handles the messy middle ground that most real agents need. For example, “what did this user say about billing in the last 30 days?” requires both semantic matching and a date filter.

4. When (and How) to Forget What You’ve Stored?

Memory without forgetting is as problematic as no memory at all. Be sure to design the deletion path before you need it.

Memory entries should carry timestamps, source provenance, and explicit expiration conditions. Implement decay strategies so older, less relevant memories don’t pollute retrieval as your store grows.

Here are two practical approaches: weight recent memories higher in retrieval scoring, or use native TTL or eviction policies in your storage layer to automatically expire stale data.

Further reading: How to Build AI Agents with Redis Memory Management – Redis and Vector Databases vs. Graph RAG for Agent Memory: When to Use Which.

Step 5: Treating the Context Window as a Constrained Resource

Even with a robust external memory layer, everything flows through the context window — and that window is finite. Stuffing it with retrieved memories doesn’t guarantee better reasoning. Production experience consistently shows that it often makes things worse.

There are a few different failure modes, of which the following two are the most prevalent as context grows:

Context poisoning occurs when incorrect or stale information enters the context. Because agents build upon prior context across reasoning steps, these errors can compound silently.

Context distraction occurs when the model is burdened with too much information and defaults to repeating historical behavior rather than reasoning freshly about the current problem.

Managing this scarcity requires deliberate engineering. You’re deciding not just what to retrieve, but also what to exclude, compress, and prioritize. Here are a few principles that hold across frameworks:

- Score by recency and relevance together. Pure similarity retrieval surfaces the most semantically similar memory, not necessarily the most useful one. A proper retrieval scoring function should combine semantic similarity, recency, and explicit importance signals. This is necessary for a critical fact to surface over a casual preference, even if the critical memory is older.

- Compress, don’t just drop. When conversation history grows long, summarize older exchanges into concise memory objects rather than truncating them. Key facts should survive summarization; low-signal filler should not.

- Reserve tokens for reasoning. An agent that fills 90% of its context window with retrieved memories will produce lower-quality outputs than one with room to think. This matters most for multi-step planning and tool-use tasks.

- Filter post-retrieval. Not every retrieved document should enter the final context. A post-retrieval filtering step — scoring retrieved candidates against the immediate task — significantly improves output quality.

The MemGPT research, now productized as Letta, offers a useful mental model: treat the context window as RAM and external storage as disk, and give the agent explicit mechanisms to page information in and out on demand. This shifts memory management from a static pipeline decision into a dynamic, agent-controlled operation.

Further reading: How Long Contexts Fail, Context Engineering Explained in 3 Levels of Difficulty, and Agent Memory: How to Build Agents that Learn and Remember | Letta.

Step 6: Implementing Memory-Aware Retrieval Inside the Agent Loop

Retrieval that fires automatically before every agent turn is suboptimal and expensive. A better pattern is to give the agent retrieval as a tool — an explicit function it can invoke when it recognizes a need for past context, rather than receiving a pre-populated dump of memories whether or not they are relevant.

This mirrors how effective human memory works: we don’t replay every memory before every action, but we know when to stop and recall. Agent-controlled retrieval produces more targeted queries and fires at the right moment in the reasoning chain. In ReAct-style frameworks (Thought → Action → Observation), memory lookup fits naturally as one of the available tools. After observing a retrieval result, the agent evaluates its relevance before incorporating it. This is a form of online filtering that meaningfully improves output quality.

For multi-agent systems, shared memory introduces additional complexity. Agents can read stale data written by a peer or overwrite each other’s episodic records. Design shared memory with explicit ownership and versioning:

- Which agent is the authoritative writer for a given memory namespace?

- What is the consistency model when two agents update overlapping records simultaneously?

These are questions to answer in design, not questions to try to answer during production debugging.

A practical starting point: begin with a conversation buffer and a basic vector store. Add working memory — explicit reasoning scratchpads — when your agent does multi-step planning. Add graph-based long-term memory only when relationships between memories become a bottleneck for retrieval quality. Premature complexity in memory architecture is one of the most common ways teams slow themselves down.

Further reading: AI Agent Memory: Build Stateful AI Systems That Remember – Redis and Building Memory-Aware Agents by DeepLearning.AI.

Step 7: Evaluating Your Memory Layer Deliberately and Improving Continuously

Memory is one of the hardest components of an agentic system to evaluate because failures are often invisible. The agent produces a plausible-sounding answer, but it’s grounded in a stale memory, a retrieved-but-irrelevant chunk, or a missing piece of episodic context the agent should have had. Without deliberate evaluation, these failures stay hidden until a user notices.

Define memory-specific metrics. Beyond task completion rate, track metrics that isolate memory behavior:

- Retrieval precision: are retrieved memories relevant to the task?

- Retrieval recall: are important memories being surfaced?

- Context utilization: are retrieved memories actually being used by the model, or ignored?

- Memory staleness: how often does the agent rely on outdated facts?

AWS’s benchmarking work with AgentCore Memory evaluated against datasets like LongMemEval and LoCoMo specifically to measure retention across multi-session conversations. That level of rigor should be the benchmark for production systems.

Build retrieval unit tests. Before evaluating end-to-end, build a retrieval test suite: a curated set of queries paired with the memories they should retrieve. This isolates memory layer problems from reasoning problems. When agent behavior degrades in production, you’ll quickly know whether the root cause is retrieval, context injection, or model reasoning over what was retrieved.

Also monitor memory growth. Production memory systems accumulate data continuously. Retrieval quality degrades as stores grow because more candidate memories mean more noise in retrieved sets. Monitor retrieval latency, index size, and result diversity over time. Plan for periodic memory audits — identifying outdated, duplicate, or low-quality entries and pruning them.

Use production corrections as training signals. When users correct an agent, that correction is a label: either the agent retrieved the wrong memory, had no relevant memory, or had the right memory but didn’t use it. Closing this feedback loop — treating user corrections as systematic input to retrieval quality improvement — is one of the most helpful sources of information available to production agent teams.

Know your tooling. A growing ecosystem of purpose-built frameworks now handles the difficult infrastructure. Here are some AI agent memory frameworks you can look at:

- Mem0 provides intelligent memory extraction with built-in conflict resolution and decay

- Letta implements an OS-inspired tiered memory hierarchy

- Zep extracts entities and facts from conversations into structured format

- LlamaIndex Memory offers composable memory modules integrated with query engines

Starting with one of the available frameworks rather than building your own from scratch can save significant time.

Further reading: Building Smarter AI Agents: AgentCore Long-Term Memory Deep Dive – AWS and The 6 Best AI Agent Memory Frameworks in 2026.

Wrapping Up

As you can see, memory in agentic systems isn’t something you set up once and forget. The tooling in this space has improved a lot. Purpose-built memory frameworks, vector databases, and hybrid retrieval pipelines make it more practical to implement robust memory today than it was a year ago.

But the core decisions still matter: what to store, what to ignore, how to retrieve it, and how to use it without wasting context. Good memory design comes down to being intentional about what gets written, what gets removed, and how it is used in the loop.

| Step | Objective |

|---|---|

| Understanding Why Memory Is a Systems Problem | Treat memory as an architecture problem, not a bigger-context-window problem; decide what to store, retrieve, and forget like you would in any production data system. |

| Learning the AI Agent Memory Type Taxonomy | Understand the four main memory types—working, episodic, semantic, and procedural—so you can map each one to the right implementation strategy. |

| Knowing the Difference Between Retrieval-Augmented Generation and Memory | Use RAG for shared external knowledge and memory for user-specific, read-write context that helps the agent learn across sessions. |

| Designing Your Memory Architecture Around Four Key Decisions | Design memory intentionally by deciding what to store, how to store it, how to retrieve it, and when to forget it. |

| Treating the Context Window as a Constrained Resource | Keep the context window focused by prioritizing relevant memories, compressing old information, and filtering noise before it reaches the model. |

| Implementing Memory-Aware Retrieval Inside the Agent Loop | Let the agent retrieve memory only when needed, treat retrieval as a tool, and avoid adding unnecessary complexity too early. |

| Evaluating Your Memory Layer Deliberately and Improving Continuously | Measure memory quality with retrieval-specific metrics, test retrieval behavior directly, and use production feedback to keep improving the system. |

Agents that use memory well tend to perform better over time. Those are the systems worth focusing on. Happy learning and building!