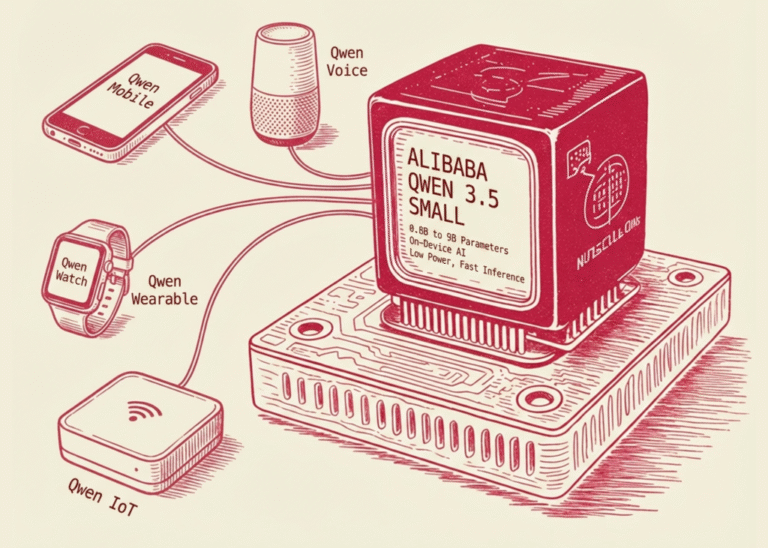

Alibaba just released the Qwen3.5 Small models: a family of models ranging from 0.8B to 9B parameters, built for on-device applications

Alibaba’s Qwen team has released the Qwen3.5 Small Model Series, a collection of Large Language Models (LLMs) ranging from 0.8B to 9B parameters. While the industry trend has historically favored increasing parameter counts to achieve ‘frontier’ performance, this release focuses…