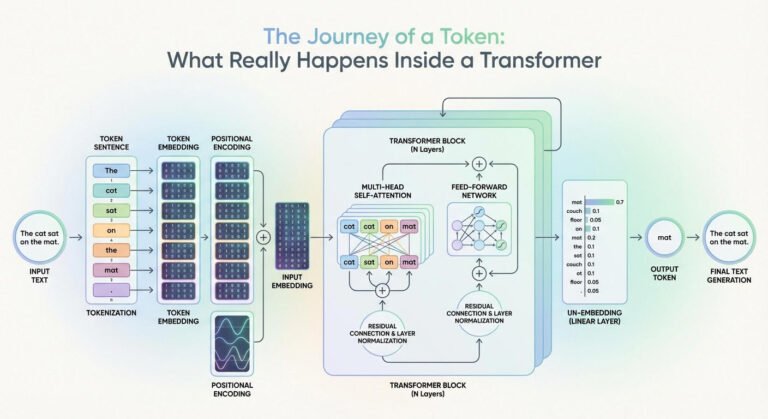

Training a Tokenizer for BERT Models

BERT is an early transformer-based model for NLP tasks that's small and fast enough to train on a home computer. Like other language models, it needs a tokenizer to convert text into integer token IDs. This article shows how to…
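Here is a small example of what "converting text into integer tokens" means. It is a minimal sketch of greedy longest-match-first WordPiece lookup, the subword scheme used by BERT tokenizers. The vocabulary is a tiny hand-written one, and `VOCAB`, `wordpiece_tokenize`, and `encode` are hypothetical names for illustration only, not a library API. The sketch leaves out the punctuation splitting and Unicode normalization that a real BERT tokenizer performs. It also uses a fixed vocabulary, whereas a trained tokenizer learns its vocabulary from a corpus.

```python
# Hypothetical toy vocabulary, hand-picked for illustration (a real one is learned from data).
# Pieces prefixed with "##" continue a word rather than start one.
VOCAB = {
    "[PAD]": 0, "[UNK]": 1, "[CLS]": 2, "[SEP]": 3,
    "the": 4, "token": 5, "##izer": 6, "##s": 7,
    "convert": 8, "text": 9, "to": 10, "integer": 11,
}


def wordpiece_tokenize(word, vocab, unk="[UNK]"):
    """Split one word into subwords by greedy longest-match-first lookup."""
    pieces = []
    start = 0
    while start < len(word):
        end = len(word)
        match = None
        # Try the longest remaining substring first, shrinking until a vocab hit.
        while start < end:
            candidate = word[start:end]
            if start > 0:
                candidate = "##" + candidate  # mark a word-internal piece
            if candidate in vocab:
                match = candidate
                break
            end -= 1
        if match is None:
            # No piece matches: the whole word becomes the unknown token.
            return [unk]
        pieces.append(match)
        start = end
    return pieces


def encode(text, vocab):
    """Lowercase, split on whitespace, WordPiece each word, wrap with [CLS]/[SEP]."""
    tokens = ["[CLS]"]
    for word in text.lower().split():
        tokens.extend(wordpiece_tokenize(word, vocab))
    tokens.append("[SEP]")
    ids = [vocab[t] for t in tokens]
    return tokens, ids


tokens, ids = encode("The tokenizers convert text", VOCAB)
print(tokens)  # ['[CLS]', 'the', 'token', '##izer', '##s', 'convert', 'text', '[SEP]']
print(ids)     # [2, 4, 5, 6, 7, 8, 9, 3]
```

The word "tokenizers" is not in the vocabulary, yet it still maps to known IDs because it splits into `token`, `##izer`, and `##s`. Subword vocabularies like this let a model with a fixed vocabulary size cover words it never saw whole.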