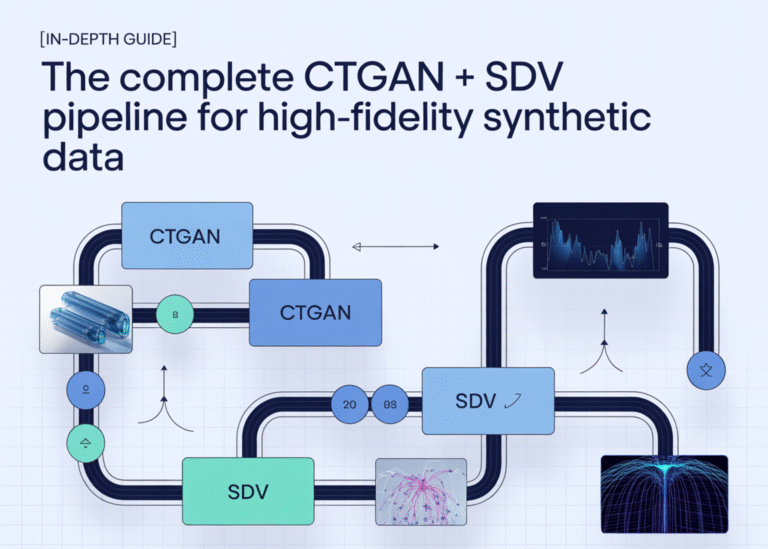

[In-Depth Guide] The Complete CTGAN + SDV Pipeline for High-Fidelity Synthetic Data

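    # Import assumed from the sdmetrics package; it is not shown in this excerpt.
    from sdmetrics.reports.single_table import DiagnosticReport, QualityReport

    # The sdmetrics reports take the metadata as a plain dict.
    metadata_dict = metadata.to_dict()

    # Diagnostic report: basic validity and structure checks on the synthetic data.
    diagnostic = DiagnosticReport()
    diagnostic.generate(real_data=real, synthetic_data=synthetic_sdv, metadata=metadata_dict, verbose=True)
    print("Diagnostic score:", diagnostic.get_score())

    # Quality report: how closely the synthetic data matches the real data statistically.
    quality = QualityReport()
    quality.generate(real_data=real, synthetic_data=synthetic_sdv, metadata=metadata_dict, verbose=True)
    print("Quality score:", quality.get_score())

    def show_report_details(report, title):
        print(f"\n===== {title} details =====")
        props = report.get_properties()
        # get_properties() returns a DataFrame; iterating the frame itself would yield column names.
        for p in props["Property"]:
            print(f"\n--- {p} ---")
            # …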