The Complete Guide to Data Augmentation for Machine Learning

Suppose you’ve built your machine learning model, run the experiments, and stared at the results wondering what went wrong.

I have been building a payment platform using vibe coding, and I do not have a frontend background.

This article is divided into four parts; they are:
• The Reason for Fine-tuning a Model
• Dataset for Fine-tuning
• Fine-tuning Procedure
• Other Fine-Tuning Techniques

Once you train your decoder-only transformer model, you have a text generator.

If you’ve trained a machine learning model, a common question comes up: “How do we actually use it?” This is where many machine learning practitioners get stuck.

Editor’s note: This article is a part of our series on visualizing the foundations of machine learning.

Large language models like LLaMA, Mistral, and Qwen have billions of parameters that demand a lot of memory and compute power.

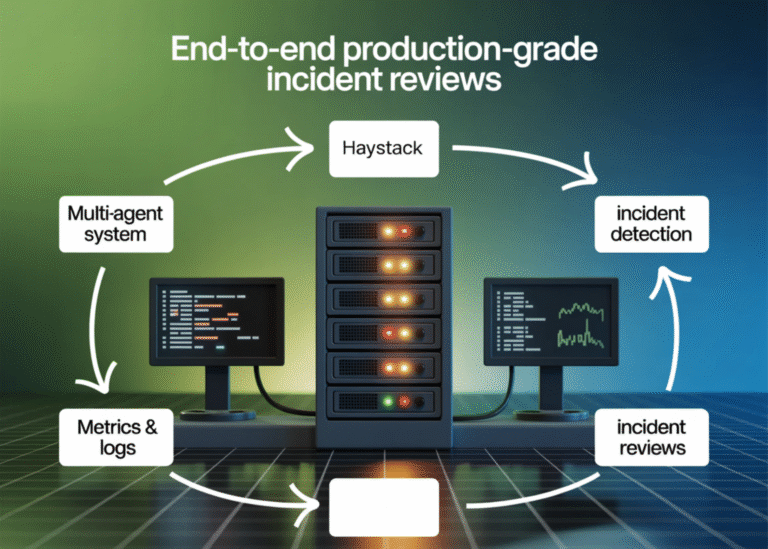

    @tool
    def sql_investigate(query: str) -> dict:
        try:
            df = con.execute(query).df()
            head = df.head(30)
            return {
                "rows": int(len(df)),
                "columns": list(df.columns),
                "preview": head.to_dict(orient="records"),
            }
        except Exception as e:
            return {"error": str(e)}

    @tool
    def log_pattern_scan(window_start_iso: str, window_end_iso: str, top_k: int = 8) ->…

Most forecasting work involves building custom models for each dataset: fit an ARIMA here, tune an LSTM there, wrestle with…

Finishing Andrew Ng’s machine learning course…

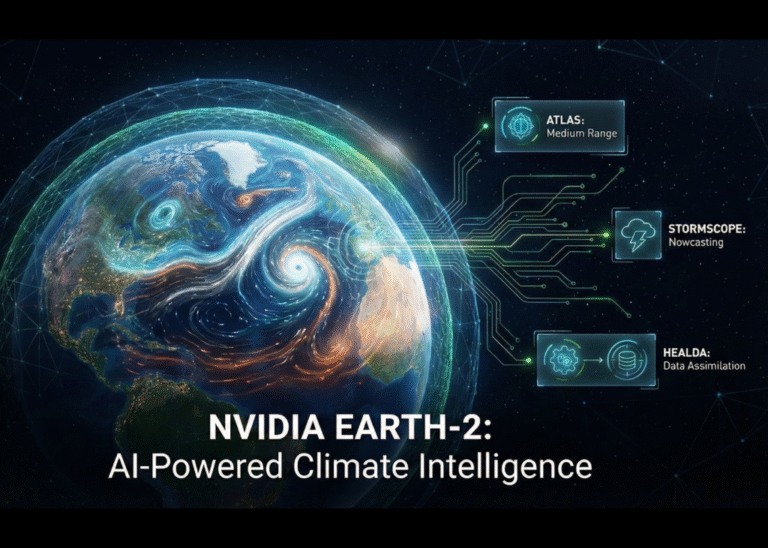

For decades, predicting the weather has been the exclusive domain of massive government supercomputers running complex physics-based equations. NVIDIA has shattered that barrier with the release of the Earth-2 family of open models and tools for AI weather and climate…