A Step-by-Step Implementation Tutorial for Building Modular AI Workflows Using Anthropic’s Claude 3.7 Sonnet via the API and LangGraph

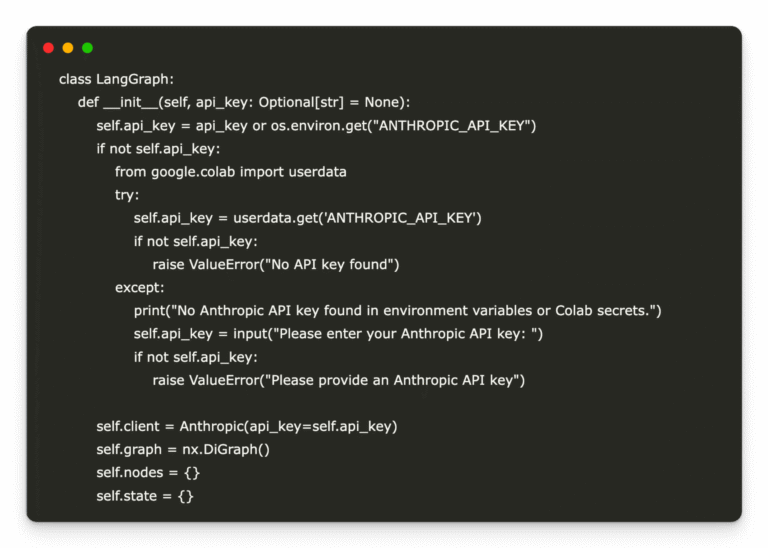

In this tutorial, we provide a practical guide to using LangGraph, a graph-based AI orchestration framework, together with Anthropic’s Claude API. Through detailed, executable code written for Google Colab, developers learn how to build and visualize AI workflows…